Data centers aren't the (only) problem. How we build has always been broken.

We've known how to innovate responsibly. We've just consistently chosen not to. Until someone makes it painful or profitable enough, nothing changes.

In 2021, with the world still locked inside and my disposable income running out of things to do, I opened a Stash investing account and dropped $100 on micro-stocks. Five dollars at a time, I picked the usual suspects: big tech, robotics, and a few companies I’d not spent much time thinking about, particularly data center developers quietly spreading their footprints across the globe. Those last ones would turn out to matter more than I understood at the time.

My investing knowledge was, generously, paltry. But one thing I’d understood since growing up in Seattle during the late-’90s tech boom, coding in high school, and interning at Microsoft the summer before college was that computers weren’t going anywhere. The data had to live somewhere. The compute had to happen somewhere. That part seemed obvious. What wasn’t obvious, what none of us were really being asked to think about, was the cost.

Not the stock price. The actual cost.

Coming home to roost

I’ve been reporting on data centers for the last two years in conversations with developers, researchers, and big tech executives as host of the TED Tech podcast. What I’ve found is that we’ve landed well past the curb on this one.

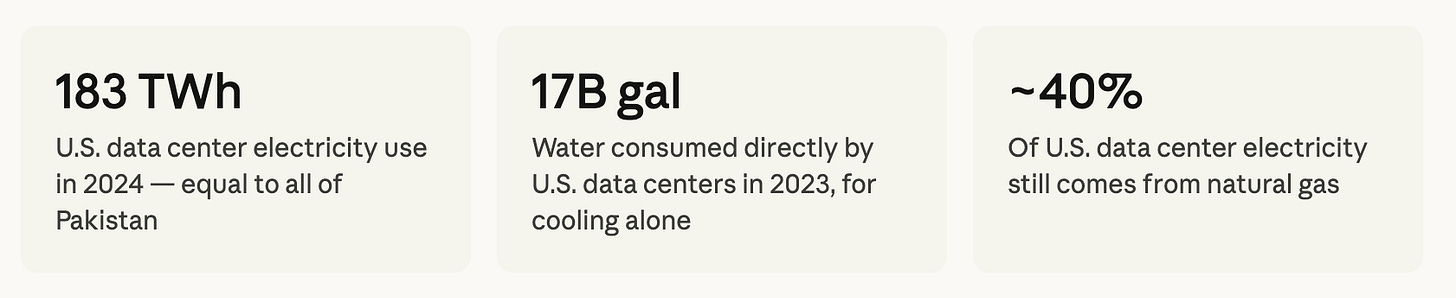

In 2024, U.S. data centers consumed 183 terawatt-hours of electricity, more than 4% of the entire country’s power supply and roughly equivalent to the annual electricity demand of Pakistan. By 2030, that figure is projected to more than double, reaching 426 TWh. Globally, the IEA estimates data centers could consume up to 945 TWh annually by the end of the decade.

Sources: IEA, Lawrence Berkeley National Laboratory, Pew Research Center (2024–2025)

Water is the quieter part of this story. A single medium-sized data center can consume up to 110 million gallons per year just for cooling, about what a thousand households use in a year. Larger hyperscale facilities can drink up to 5 million gallons a day. In 2023, U.S. data centers collectively consumed about 17 billion gallons of water directly through cooling. Lawrence Berkeley National Laboratory projects that figure could double or quadruple by 2028. And that’s before accounting for the water used upstream by the power plants supplying their electricity. Add that in, and you’re looking at another 211 billion gallons annually.

A single Google data center in Council Bluffs, Iowa, used 1 billion gallons of water in 2024, enough to supply all of Iowa’s residential water for five days. And fewer than a third of data center operators even track their water usage.

“We want the tools without the consequences. Cell phones that haven’t sacrificed children’s hands in the Congo for cobalt. EVs that haven’t poisoned the water table for lithium. Data centers that give us the highest computing power possible without destroying jobs, the environment, or the communities that host them. This should be a baseline. It’s not.”

We’ve been here before

This isn’t new. I’ve been covering sustainable innovation since 2010, earning my bylines at publications like Inhabitat, Black Enterprise, and Fast Company and assuming everyone would be interested in, and enthusiastic about, alternatives to harming the planet. The pattern is the same every time: a technology emerges, it scales, the externalities pile up, and only after the lawsuits, the contamination reports, the documented diseases, and the community organizing does anyone seriously ask whether there was a better way. Fast fashion. Single-use plastics. Mountaintop-removal coal. The supply chains behind our smartphones and our electric vehicle batteries. Could we have done it without requiring young children to mine for cobalt or lithium with their bare hands just to feed their families for the day? Or without factory workers developing cancer from daily exposure to toxic chemicals?

There’s a strange contradiction embedded in all of it. We want to think less, work less, gain more aaaand we also do not want to see humanity destroyed in the process. But we’ve consistently built technologies that force the rest of the world to absorb the cost of our convenience, then act surprised when the bill comes due.

Derek Thompson and Ezra Klein, in their book Abundance, argue that trying to get consensus from too many stakeholder groups is what stalls solutions, that the impulse toward inclusion becomes its own form of paralysis. I understand the argument. But I don’t think “move fast and figure it out later” is actually cheaper. That slogan, Silicon Valley’s creed for startup culture for the better part of two decades, produced fewer empathetic companies and more “win at all costs” products: ones that concentrated wealth at the top, extracted value from communities and workers, and generated the very distrust, backlash, and regulatory reckoning we’re now wading through. The bill for “moving fast and breaking things” always arrives. It just gets sent to someone else first.

What’s expensive is building a data center, then spending years retrofitting it when the community sues, the utility can’t supply the load, or the water authority pulls the permit. It is far more economical to design with reduced water and energy use as baseline requirements than to engineer backward from a hot mess that communities are forced to clean up while large companies send in PR teams to repair their images.

Why we still haven’t learned to build with the end in mind

The question I keep returning to, and I’ve combed through the lawsuits, sat with the developers, listened to the executives opine on infrastructure, is this: why have we still not learned to innovate with the end in mind?

Not the dystopian end. Not the Elon version where a select few escape to Mars while the rest of us figure out what to do with a warming planet. I mean the practical end. Who gets affected? Which jobs disappear and which appear? How do we prepare people for the work ahead so they can sustain their families and livelihoods? What’s the power source? How do you use less water? How do you make it safe? Who is missing from the conversation and from the data?

The answers aren’t science fiction. Models exist. Self-healing concrete (I actually just recorded an episode on this for TED Tech that will drop in a few weeks). Direct-to-chip cooling that dramatically cuts water use. Closed-loop cooling systems that recirculate rather than evaporate. In 2024, Microsoft announced a goal to operate a zero-water-evaporation data center. Companies like Soluna are already running data centers on renewables. Rewiring America is building a test model where solar panels and battery storage installed in a neighborhood become the power source for a local data center, which then buys the power back from the homeowners. The infrastructure already in the community becomes the infrastructure powering the center.

These aren’t niche experiments. They’re proof that another way exists. We just haven’t made it the default.

The EV lesson

Here’s what gives me cautious hope. Tesla didn’t just build electric cars. It forced the entire auto industry to move. Every major manufacturer had to put their foot on the accelerator (pun very much intended). The result: EV sales topped 17 million globally in 2024, crossing 20% of the world car market for the first time. The International Energy Agency projects that in 2025 more than 1 in 4 cars sold worldwide will be electric. China is approaching half. That shift happened within a decade.

It didn’t happen because everyone agreed it was the right thing to do. It happened because someone built a product people wanted, proved it was viable at scale, and made the old model look embarrassingly obsolete by comparison. The market followed. Policy followed. Investment followed.

Data centers need their Tesla moment. And honestly, the economics are already pointing there. The investors who poured capital into green technologies and materials over the last decade, an often overlooked, often underfunded market category, are starting to look prescient. Sustainable design isn’t idealism anymore. It’s a competitive advantage, a liability hedge, and increasingly, a condition of doing business at all. That shift creates real openings: for workers looking for their place (and a bit of stability) in an AI-driven world, for entrepreneurs willing to out-innovate those doing business as usual, and for companies smart enough to realize that the next decade rewards whoever figures out how to do this right first.

Stop being lazy about it

We’ve decided, for a long time now, that the cheaper and more polluting approach is better because a contract and a chance to make money said so. That calculus is changing. The communities where data centers want to build are organized, litigious, and paying attention to their water bills. The utilities supplying power are strained. In parts of the mid-Atlantic, data center demand has already pushed residential electricity rates up 20%.

The polarization keeps us stuck. One side says any friction around development is anti-progress. The other treats every new project like a crisis. But between “build it and apologize later” and “don’t build it at all,” there is an enormous middle: build it right, from the beginning, with the people and places it will affect in the room from day one. This is what good leadership looks like.

I’m not naive about how rare that is. But I’ve seen it happen. And I think we’re closer to a tipping point than the headlines suggest. Not because companies have suddenly grown a conscience, but because the old way is becoming genuinely, measurably expensive.

There’s always another, better, more considerate way. We just have to stop being so damn lazy about finding it.

I'd love to see you delve deeper into your experience & observations around this question:

"The question I keep returning to, and I’ve combed through the lawsuits, sat with the developers, listened to the executives opine on infrastructure, is this: why have we still not learned to innovate with the end in mind?"

I feel that I know some ways to answer this question, but my gut tells me that you'd surprise me. Is it just economics? Is it just that the system is set up to privilege status-quo supply chain decisions? I truly believe that some of the very folks promoting innovation at the expense of people and the environment know and feel that it's wrong. Is greed the main thing that gets them to ignore or avoid those considerations?

People who point to the success of Tesla often forget about the EV-1. This was an electric car from two decades before. By all accounts, drivers who had them loved them. Unfortunately, GM only leased them. Then, when Congress made owning an SUV cheaper by allowing them to be classified as work vehicles and depreciated over 3 years, the die was cast, and GM recalled and shredded the EV-1s. So the knowledge was there, but the incentives got twisted and perverse. I’m sure big oil had a major influence.

One of our hopes going forward could be to challenge all new designers to think like the Fremen from Dune. They literally conserve every drop of water, to the point of “reclaiming” someone’s water when they die. We need that type of approach to water usage to get people to engineer a better way. Help me spread the word and challenge people to “Think like a Fremen.”